Meet 17-year-old Nathan Chen, co-author of Kimi's 'impressive' work

Writer: Li Dan | Editor: Lin Qiuying | From: Original | Updated: 2026-03-18

Tesla and SpaceX's billionaire founder Elon Musk posted on his social media platform X on Monday that Kimi's work was "impressive," bringing a newly disclosed technical advancement by Chinese AI firm Moonshot into the spotlight.

Musk's X post has drawn attention to Kimi's "attention residuals" method for passing information through large models. File photos

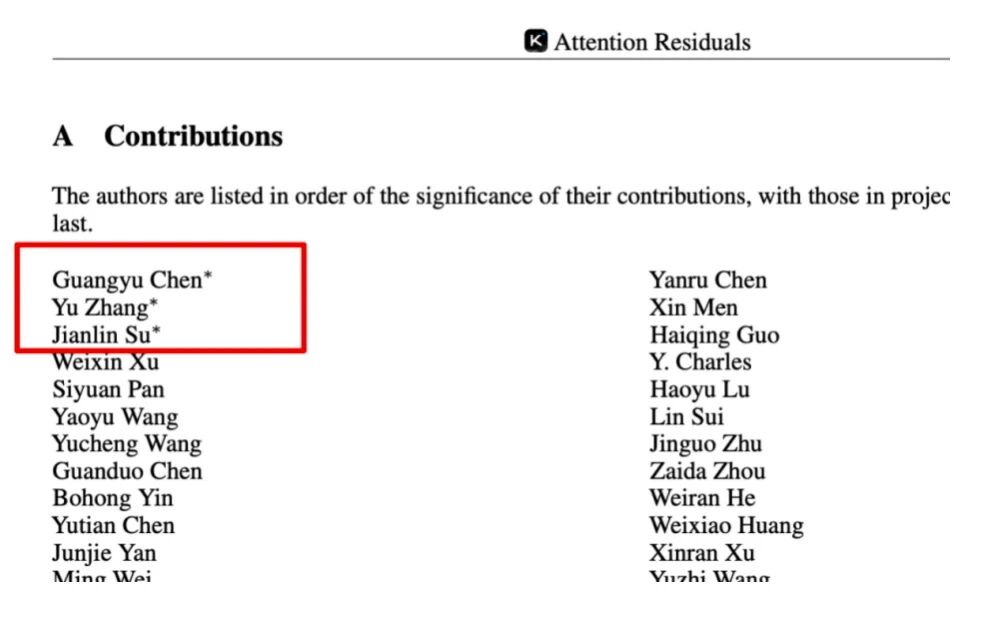

Nathan Chen Guangyu, a 17-year-old from Shenzhen who is currently a 12th-grader at the Basis International School Park Lane Harbor, immediately caught public attention for appearing at the top of the authors list on the paper published the same day.

Nathan Chen appears at the top of the authors list on the paper.

Zhang Yu, a key researcher on Kimi's architecture efficiency, and Su Jianlin, a renowned large language model researcher, are listed alongside Chen as first authors with “equal contribution” to the research. Su previously proposed Rotary Position Embedding (RoPE), a widely adopted position encoding method in mainstream large models.

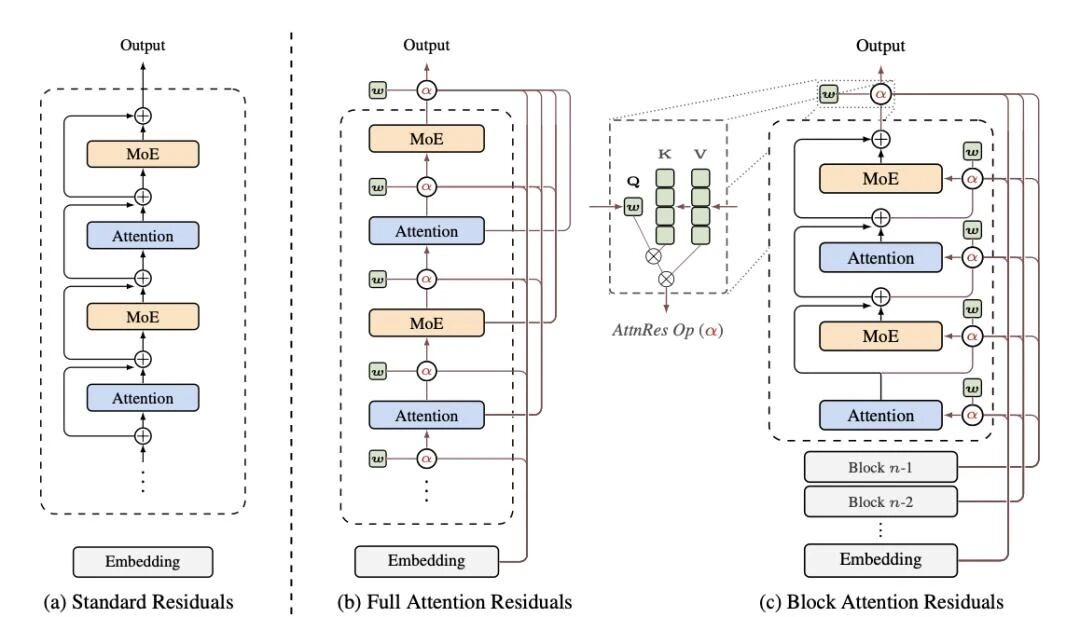

Most of today's large models are built on the Transformer architecture, which appeared in 2017 and set off the rapid generative AI boom. It changed how information within a text is processed, but information is still passed from layer to layer indiscriminately through a method called "residual connection." Simply put, each layer's output is added directly to the input it received from the previous layer, and the sum is passed on to the next one. The method is simple and effective, but as the number of layers grows, truly important information can be diluted by the continuously accumulating content.
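The dilution described above can be illustrated with a toy sketch. It is not taken from the paper: the function names `run_with_residuals` and `constant_update` are hypothetical, values are plain numbers rather than vectors, and each layer is assumed to contribute an update of the same size.

```python
def run_with_residuals(x, layers):
    """Pass x through the layers, adding each layer's output to the running sum."""
    h = x
    for layer in layers:
        h = h + layer(h)  # standard residual connection: keep the input, add the update
    return h


def constant_update(h):
    """Hypothetical layer that always contributes an update of size 1.0."""
    return 1.0


original_input = 1.0
final = run_with_residuals(original_input, [constant_update] * 9)

print(final)                   # 10.0
print(original_input / final)  # 0.1
```

Under these assumptions, after nine layers the original input makes up only one tenth of the accumulated value, and every earlier contribution carries the same fixed weight no matter how useful it is.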

This image compares standard residual connections with attention residuals.

Researchers, including OpenAI co-founder Ilya Sutskever, have pondered whether this method could be improved.

The new "attention residuals" method proposed by the Kimi team addresses this problem. Instead of having each layer indiscriminately receive information from all previous layers, the current layer selectively chooses more valuable content to reference and then aggregates it.
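The idea of selective aggregation can be sketched in a few lines. This is a minimal illustration, not the Kimi team's implementation: it assumes each layer holds a query vector and scores earlier layer outputs by dot product followed by a softmax, and the names `attention_aggregate`, `query` and `earlier_outputs` are hypothetical. The article does not describe the exact formulation used in the paper.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]  # subtract the max for numerical stability
    total = sum(exps)
    return [e / total for e in exps]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def attention_aggregate(query, earlier_outputs):
    """Weighted sum of earlier layer outputs; the weights depend on the query."""
    weights = softmax([dot(query, h) for h in earlier_outputs])
    dim = len(earlier_outputs[0])
    mixed = [sum(w * h[i] for w, h in zip(weights, earlier_outputs)) for i in range(dim)]
    return mixed, weights


# Three earlier layer outputs; the (assumed) query matches the first one most strongly.
earlier_outputs = [[1.0, 0.0], [0.0, 1.0], [0.0, 1.0]]
query = [4.0, 0.0]

mixed, weights = attention_aggregate(query, earlier_outputs)
print(weights)  # the first weight dominates; the three weights sum to 1
print(mixed)
```

A plain residual sum would give all three outputs the same weight. Here the layer that best matches the query receives most of the weight, which is the kind of selective referencing the paragraph above describes.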

The significance of this work lies in offering an alternative path: enhancing large model capabilities doesn’t necessarily rely solely on stacking parameters and computing power; it can also start with the underlying structure to improve information processing efficiency.

Nathan Chen's X account is followed by a16z founder Marc Andreessen and other influential figures.

This method has been validated on the Kimi Linear 48B model, cutting training computation by about 20% while maintaining similar effectiveness. This is equivalent to about 1.25 times the efficiency of the traditional structure, with an increase in inference latency of less than 2%. What's more, the new method can directly replace standard residual connections without adjusting other parts of the model. With this new direction, model designers might refocus on increasing depth rather than continuing to expand parameter scale.
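The two figures are consistent with each other if "efficiency" is read as results per unit of training compute, which is an assumption here since the article does not define the term. The short check below uses a hypothetical helper, `efficiency_multiplier`, to show the arithmetic.

```python
def efficiency_multiplier(compute_reduction):
    """Efficiency gain from reaching the same result with a fraction less compute."""
    if not 0.0 <= compute_reduction < 1.0:
        raise ValueError("compute_reduction must be in [0, 1)")
    return 1.0 / (1.0 - compute_reduction)


# A 20% reduction means the same result for 80% of the compute: 1 / 0.8 = 1.25.
print(round(efficiency_multiplier(0.20), 4))  # 1.25
```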

Chen's deep engagement with AI research only began in the past year. Under the guidance of DeepSeek researcher Yuan Jingyang, Chen quickly built his foundational knowledge by studying classic papers and tracking open-source projects on GitHub.

Later, his reflections on technical blog posts shared on social media caught the attention of a CEO from a Silicon Valley AI startup, and after passing a timed test, he secured an internship offer last year. During the summer vacation, he interned at the company for seven weeks, and after returning to China, he joined the Kimi team as an intern last November.

While the public may be fascinated by the story of another tech prodigy shooting to fame, Chen approached his goal step by step, through hard work and resilience.

Intrigued by our era's fast-expanding technology, Chen turned his interest into capability, built connections in the field, bounced his ideas off some of the smartest minds, and finally used that capability to solve a real-world challenge in large models.